Card Efficiency Analytics Explained for Delivery Teams

A delivery-lead guide to card efficiency analytics: estimated vs logged time at the card and assignee level, how to read over- and under-runs, and what to change next sprint.

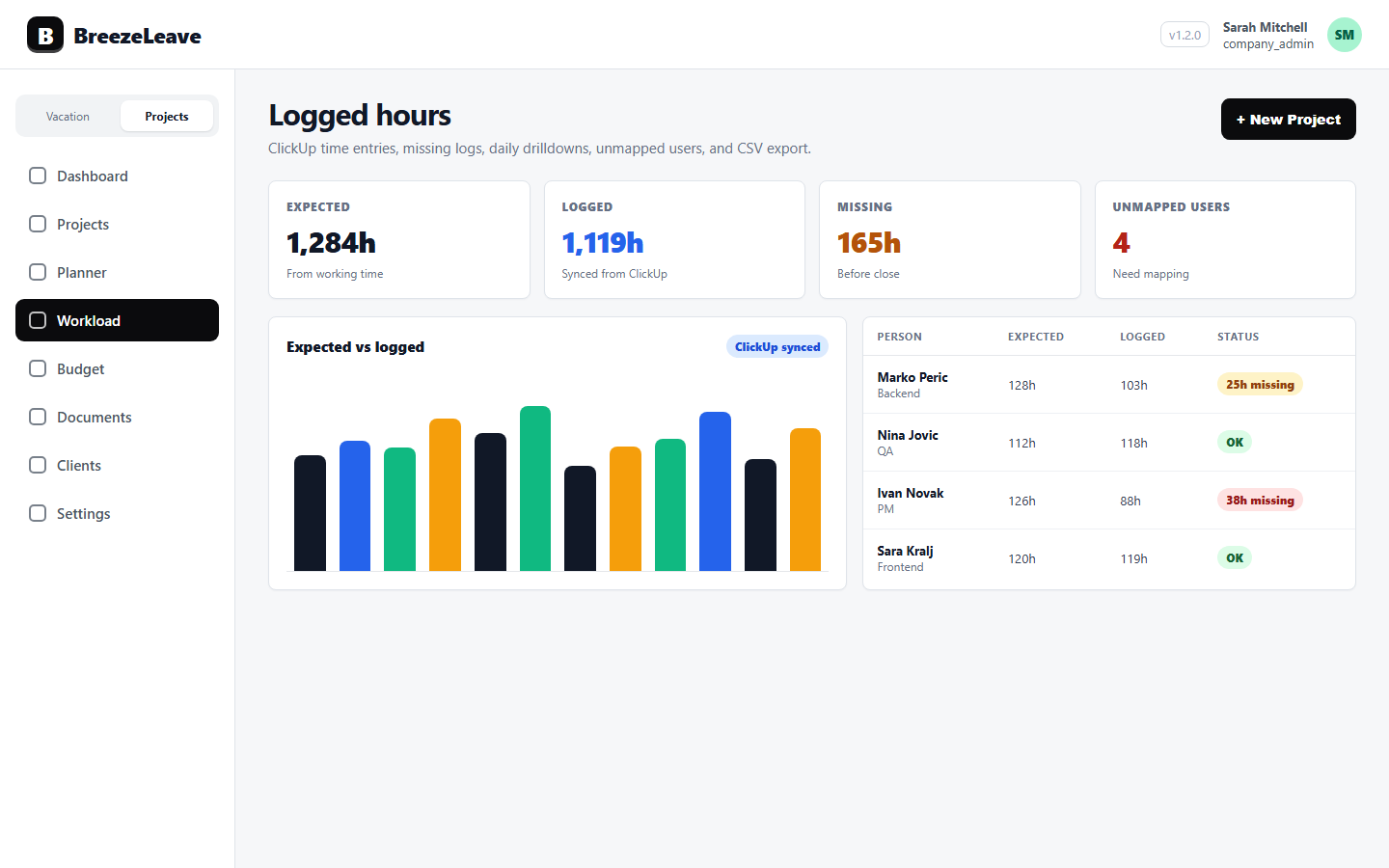

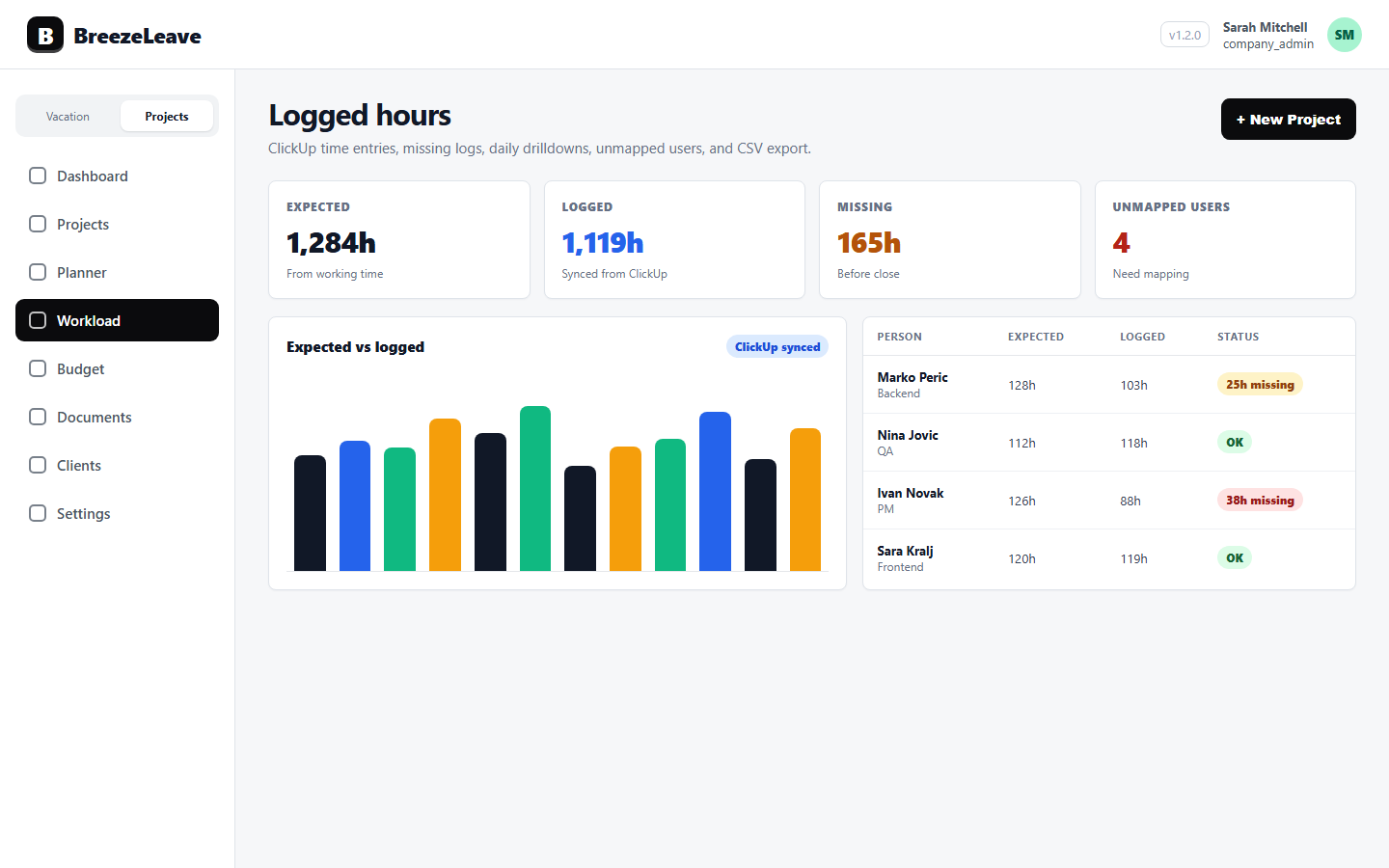

A delivery lead opens the sprint retro on a Tuesday afternoon. The standup notes look clean: tickets moved, cards closed, nothing on fire. Then the ClickUp time entries get pulled in and the picture changes. A card estimated at 4 hours took 14. Another estimated at 2 days closed in 30 minutes. One contractor logged 22 hours across cards with no estimate at all. The retro stops being a victory lap and starts being a conversation about why estimates and reality drifted by a factor of three or more. Card efficiency analytics is the report that surfaces this conversation before the next sprint commits to more of the same.

This article is for the delivery lead trying to read card efficiency as a planning signal, not as a performance review. It walks through what the ratio measures, where it misleads, and how to use it to change the next planning conversation.

What card efficiency measures

Card efficiency is the ratio of estimated time to logged time per ClickUp task. The BreezeLeave integration computes it at two levels:

- Per card. Estimated hours on the ClickUp card vs the sum of time entries logged against it.

- Per assignee. Across all cards a person owns in a period, the average ratio between estimated and logged.

A ratio of 1.0 means estimate equals reality. Below 1.0 means logged more than estimated (an over-run). Above 1.0 means logged less (an under-run). Both directions matter, for different reasons.
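The arithmetic behind the two levels can be sketched in a few lines of Python. This is a minimal illustration, not BreezeLeave's implementation: the `Card` record and its field names are hypothetical, the sample numbers borrow from the retro story above (with a 2-day estimate assumed to be 16 hours), and "average ratio" is read here as a plain mean of per-card ratios, which is an assumption.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Card:
    # Hypothetical record shape; not the ClickUp or BreezeLeave schema.
    name: str
    assignee: str
    estimated_hours: Optional[float]  # None when the card has no estimate
    logged_hours: float               # sum of time entries on the card


def card_ratio(card: Card) -> Optional[float]:
    """Estimated / logged. Returns None when no ratio can be computed."""
    if card.estimated_hours is None or card.logged_hours <= 0:
        return None
    return card.estimated_hours / card.logged_hours


def assignee_ratio(cards: list[Card], assignee: str) -> Optional[float]:
    """Mean of per-card ratios for one assignee, skipping cards with no ratio."""
    ratios = [card_ratio(c) for c in cards if c.assignee == assignee]
    ratios = [r for r in ratios if r is not None]
    return mean(ratios) if ratios else None


# Illustrative data only; assignee names are made up.
cards = [
    Card("Checkout bug", "ana", estimated_hours=4, logged_hours=14),   # over-run, ratio below 1.0
    Card("Copy tweak", "ana", estimated_hours=16, logged_hours=0.5),   # under-run, ratio above 1.0
    Card("Ad hoc support", "ben", estimated_hours=None, logged_hours=22),  # no estimate, no ratio
]

for c in cards:
    print(c.name, card_ratio(c))
print("ana", assignee_ratio(cards, "ana"))
print("ben", assignee_ratio(cards, "ben"))
```

In this sample the first assignee's mean lands far above 1.0 because one large under-run swamps the over-run, which is one reason the per-assignee number needs careful reading.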

Reading over-runs as a planning question

An over-run is a card that took more time than was estimated. The instinct is to ask the assignee why. That is usually the wrong first question. Five things cause over-runs more often than a lack of effort does:

- The estimate was wrong. The card scope was understood differently by the estimator and the executor. This is the most common case.

- Scope crept. The card grew and was never re-estimated. A "small change" turned into a refactor.

- Dependencies blocked the work. The assignee logged time waiting on something, or doing a workaround.

- The card was actually two cards. One task should have been split.

- The work needed unplanned rework. QA bounced it back, or the design changed mid-build.

The first conversation about an over-run is with the planner, not the executor. Was this card estimated by someone who has done that type of work? Was the scope written clearly enough to estimate against? If both answers are yes, the next conversation is about what changed between planning and delivery.

Reading under-runs is harder than it looks

An under-run is a card that closed faster than estimated. That sounds like good news, and sometimes it is. Three patterns are worth investigating:

- The estimate was padded. Recurring under-runs by the same estimator suggest the planning buffer is too generous, which makes the team look slower than it is.

- Time was logged elsewhere. The assignee did the work on the right card but logged the time to a different card. The card looks fast; the wrong card carries the time.

- The card was skipped or partially done. The assignee closed the card because it stopped being relevant, but the closure looks like efficient delivery.

The third case is the one that hurts the client. If a card closes before the work is complete, the retainer report still shows the estimated revenue but the delivered value is lower. Drill into a few under-runs every sprint to confirm the cards closed with work done, not with work dropped.

Per-assignee efficiency, used carefully

The per-assignee view shows the average ratio across all cards a person worked on in a period. It is tempting to read this as a productivity score. That reading is wrong most of the time, and using it as a score corrodes the team's willingness to log time honestly.

Better questions to ask the per-assignee view:

- Are the cards this person owns being estimated by the right people? A junior owner working from a senior's estimate often runs over.

- Is this person consistently working on a card type whose estimates are off across the team? That points at a planning calibration issue, not a person issue.

- Is the person picking up many small, unestimated cards? Their logged hours will look high against the small estimated hours that exist.

| Per-assignee signal | What it might mean | What to change |
|---|---|---|
| Persistent over-runs | Estimate source mismatched with executor | Estimate with the person who will do the work |
| Persistent under-runs | Time logged elsewhere or padding | Drill into a few cards; check the close note |
| Wild swings | Mixed card types or scope creep | Split the view by card type or project |
| High unestimated time | Cards without estimates dominate the queue | Make estimates required for new cards |

Card types and why aggregation matters

A 30-minute card and a five-day card behave differently when you average their efficiency ratios. A 60-minute over-run on the 30-minute card means logged time is three times the estimate, a ratio of about 0.33; the same absolute over-run on the five-day card is barely a blip. Averaging both into one number hides what is happening.

Read the report sliced by card size or card type when possible. BreezeLeave's logged hours view supports filters by ClickUp project, list, and assignee. Filtering pulls the analysis into apples-to-apples comparisons:

- All design cards from the past sprint.

- All QA cards from the past quarter.

- All cards above 8 estimated hours from a single project.

Each slice tells a different story. The design slice might be calibrated well; the QA slice might show consistent under-runs that point at QA time being logged to dev cards. The unsliced report would hide both.
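A small sketch shows why the unsliced number hides things, using the 30-minute and five-day cards from the example earlier in this section. The tuple layout and the "small"/"large" labels are hypothetical, and a five-day card is assumed to be 40 hours (8-hour days).

```python
from collections import defaultdict
from statistics import mean

# (card_type, estimated_hours, logged_hours); illustrative numbers only.
cards = [
    ("small", 0.5, 1.5),    # 30-minute card with a 60-minute over-run
    ("large", 40.0, 41.0),  # five-day card (8-hour days assumed), same over-run
]


def ratio(estimated: float, logged: float) -> float:
    """Efficiency ratio as defined in this article: estimated / logged."""
    return estimated / logged


# One blended number across every card.
overall = mean(ratio(e, l) for _, e, l in cards)

# The same ratios, grouped by card type before averaging.
by_type = defaultdict(list)
for card_type, e, l in cards:
    by_type[card_type].append(ratio(e, l))
sliced = {t: mean(rs) for t, rs in by_type.items()}

print(round(overall, 2))                              # 0.65
print({t: round(r, 2) for t, r in sliced.items()})    # {'small': 0.33, 'large': 0.98}
```

The blended 0.65 describes neither card: the small card is badly off and the large one is nearly on target.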

How card efficiency feeds capacity planning

If logged time consistently runs 30% over estimate, capacity planning needs to account for that. Booking a team to 100% of estimated hours when logged time runs 1.3 times the estimate (an efficiency ratio of about 0.77) means the team will deliver roughly 77% of what was promised. Two options:

- Adjust estimates upward. Re-baseline future estimates by the calibration factor (multiply by 1.3). The team commits to fewer cards per sprint but hits the ones it commits to.

- Adjust planning capacity downward. Plan to roughly 77% of theoretical capacity (1 / 1.3) instead of 100%. The estimates stay the same; the planned slot count drops.

Both work. The first is honest with the client. The second is honest with the team. A delivery lead who runs both for a quarter usually settles on a mix. For the broader capacity-planning context, see project capacity planning for agencies.
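The arithmetic behind both options is short enough to sketch. The function names below are hypothetical, and `overrun_factor` means logged time divided by estimated time (1.3 for a consistent 30% over-run), which is the inverse of the efficiency ratio defined earlier.

```python
def deliverable_fraction(overrun_factor: float) -> float:
    """Share of promised work that fits when booked to 100% of estimated hours."""
    return 1.0 / overrun_factor


def rebaselined_estimate(estimate_hours: float, overrun_factor: float) -> float:
    """Option 1: scale a future estimate up by the observed over-run."""
    return estimate_hours * overrun_factor


def planned_capacity(available_hours: float, overrun_factor: float) -> float:
    """Option 2: estimated hours to book so logged time fits the available hours."""
    return available_hours / overrun_factor


factor = 1.3  # logged time runs 30% over estimate
print(round(deliverable_fraction(factor), 2))      # 0.77
print(round(rebaselined_estimate(10, factor), 1))  # a 10-hour estimate becomes 13.0
print(round(planned_capacity(160, factor), 1))     # 160 available hours -> book about 123.1
```

Either way the team ends up committing to the same amount of real work; the difference is whether the adjustment shows up in the estimates or in the booking level.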

When card efficiency is not the right signal

A few scenarios where the report misleads more than it helps:

- Research and exploration work. If the goal of the card is to find out how long something will take, the estimate is provisional by design. An over-run is not a failure.

- Cards without estimates. With no estimate there is no meaningful ratio to compute. These cards show up separately; do not roll them into the per-assignee average.

- Pair work. Two assignees on one card produce double the logged time. Make sure the report can distinguish pair work from solo work for cards where it matters.

- Discovery sprints. Early-stage work where the scope is still being defined runs over by definition. Card efficiency is a steady-state metric.

Use the report where it fits. Skip it where it does not. A delivery lead who chases a 1.0 ratio for research work is optimizing the wrong number.

A small monthly routine

Once a month, take 30 minutes for card efficiency. Steps:

- Pull the past 30 days of card efficiency, filtered by project.

- List the five worst over-runs. Drill into each. Note the cause.

- List the five biggest under-runs. Drill into each. Confirm the work shipped.

- Note the per-card-type calibration ratio.

- Decide one planning change for next sprint.

One change per month is enough. Candidates include re-estimating a card type, splitting a recurring large card, requiring estimates on QA work, or moving the estimation conversation to the executor. Small, repeated changes compound faster than a single large initiative.
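The two listing steps in the routine can be sketched as a pair of sorts over exported card data. The dictionary keys are hypothetical, and "worst" is ranked here by absolute hours of difference rather than by ratio; that is an assumption, and ranking by ratio would surface small cards instead.

```python
def worst_overruns(cards, n=5):
    """Cards with the most hours logged beyond the estimate, largest first."""
    over = [c for c in cards if c["logged"] > c["estimated"]]
    return sorted(over, key=lambda c: c["logged"] - c["estimated"], reverse=True)[:n]


def biggest_underruns(cards, n=5):
    """Cards that closed furthest under the estimate, largest gap first."""
    under = [c for c in cards if c["logged"] < c["estimated"]]
    return sorted(under, key=lambda c: c["estimated"] - c["logged"], reverse=True)[:n]


# Illustrative data only; hours are made up.
cards = [
    {"name": "A", "estimated": 4, "logged": 14},
    {"name": "B", "estimated": 16, "logged": 0.5},
    {"name": "C", "estimated": 8, "logged": 9},
    {"name": "D", "estimated": 2, "logged": 2},
    {"name": "E", "estimated": 6, "logged": 3},
]

print([c["name"] for c in worst_overruns(cards, n=2)])     # ['A', 'C']
print([c["name"] for c in biggest_underruns(cards, n=2)])  # ['B', 'E']
```

The output is only a shortlist; the drill-in and the cause notes still need a person.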

Before any of this is useful, the underlying time entries have to be clean. The hygiene routine in the ClickUp logged-hours hygiene checklist is the precondition. Card efficiency on bad data is worse than no data at all.

Quick reference: how to read the card efficiency report

- Confirm the time entries it reads are clean (hygiene routine first).

- Filter to a card type, project, or sprint to compare like with like.

- Read over-runs as planning questions, not execution questions.

- Drill into under-runs to confirm cards closed with work done.

- Use per-assignee data to ask team-shape questions, not individual ones.

- Feed the calibration ratio into next sprint's planning.

- Skip the report for research, discovery, and unestimated work.

Card efficiency is one signal among several. Used carefully, it helps a delivery team plan more honestly. Used as a performance scorecard, it breaks the trust that makes the time entries truthful in the first place. To start reading card efficiency for your team, head to pulling ClickUp time into BreezeLeave.