A 220-person legal services firm headquartered in Frankfurt has a data-residency clause in every client contract. Employee leave data is personal data under the GDPR, which means the firm needs to know where the database sits, who reads the backups, and how long the audit log is retained. The IT lead has already vetoed three SaaS HR tools because the vendor would not commit to keeping the data inside a specific EU jurisdiction. The HR director wants a leave product that does not feel like a step backward from the cloud apps the team already knows. Self-hosted BreezeLeave is the answer to that pairing.

Self-hosted is not the default deployment for BreezeLeave. The cloud version covers most teams without the operational overhead of running your own stack. The self-hosted option exists because some organizations cannot use a multi-tenant SaaS at all, or can only use one if the data stays inside infrastructure their security team controls. This page covers when the self-hosted choice makes sense, what ships in the box, and what the customer is on the hook for.

When self-hosted is the right choice

The decision is not about preference. It is about constraints that a cloud product cannot satisfy. Three patterns drive almost every self-hosted conversation.

- Data residency commitments. Client contracts, regulator guidance, or internal policy require employee data to live in a specific jurisdiction. EU companies are the most common case, but the same logic applies to Swiss firms bound by Swiss data-protection law and to Canadian provincial-government suppliers.

- Network-isolated environments. Healthcare, defense, and some financial institutions run their internal tooling inside isolated networks. They need a leave product that runs entirely inside that network, with whatever outbound integration policy their security team approves.

- Bring-your-own-key encryption and full audit control. Some compliance frameworks require the customer to hold the encryption keys protecting personal data and to retain audit logs in storage the customer controls. A self-hosted deployment is the straightforward way to satisfy both.

If none of those constraints apply, cloud is the better answer. Self-hosted adds operational responsibility (backups, patches, certs, monitoring), and that responsibility does not pay off unless one of the constraints above is real.

What ships with self-hosted

Self-hosted BreezeLeave is the same application as cloud, packaged for deployment on infrastructure you control. The release artifacts are:

- Signed Docker images. NestJS backend, React/Vite frontend served by nginx, plus the supporting workers. Tagged by version so you can pin to a known release.

- docker-compose template. A reference stack that wires the backend, frontend, PostgreSQL, and Redis together. You can deploy this template as-is for a single instance, or use it as the basis for a Kubernetes or ECS rollout.

- Environment-variable documentation. Every configurable surface (JWT secret, database URL, Redis URL, SMTP credentials, OAuth client IDs, allowed origins) is documented with examples. The documentation flags which variables are required at boot and which can be added later as you enable integrations.

- Upgrade scripts. Database migrations are bundled with each release and run idempotently. The upgrade script handles schema changes, seed-data updates, and any version-specific cleanup. You can run the migration step on a staging clone before pointing production at the new image.

- Health endpoints. Liveness and readiness endpoints for your monitoring stack, plus a metrics endpoint your security team can scrape into the internal Prometheus or equivalent.
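To illustrate the required-at-boot versus add-later split described for environment variables above, here is a minimal Python sketch of a pre-flight check you could run before starting the stack. The variable names (JWT_SECRET, DATABASE_URL, and so on) and their grouping into required and optional are assumptions made for this example; the shipped environment-variable documentation is the authority on both.

```python
from typing import Mapping

# Hypothetical names and grouping, for illustration only.
REQUIRED_AT_BOOT = ("JWT_SECRET", "DATABASE_URL", "REDIS_URL")
OPTIONAL_LATER = ("SMTP_HOST", "SLACK_CLIENT_ID", "GOOGLE_CLIENT_ID", "ALLOWED_ORIGINS")


def check_environment(env: Mapping[str, str]) -> dict[str, list[str]]:
    """Report which required variables are missing and which optional ones are unset."""

    def is_unset(name: str) -> bool:
        # Treat absent, empty, and whitespace-only values the same way.
        return not env.get(name, "").strip()

    return {
        "missing_required": [name for name in REQUIRED_AT_BOOT if is_unset(name)],
        "deferred_optional": [name for name in OPTIONAL_LATER if is_unset(name)],
    }
```

Calling check_environment(os.environ) in a deploy script and refusing to start when missing_required is non-empty catches a half-filled environment file before the containers do.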

What you bring

The customer owns everything below the application boundary. Specifically:

- Compute. A host or set of hosts that can run Docker. For a 200-person company, a single VM with four vCPUs and 8 GB of memory covers the backend and frontend containers comfortably. Larger deployments split the database onto its own host.

- PostgreSQL. The application targets PostgreSQL 14 or newer. You can run it inside the same Docker Compose stack, or point at a managed Postgres (RDS, Azure Database for PostgreSQL, Cloud SQL) in your account.

- Redis. Used for session state and the background-job queue. A small single-node Redis is usually enough.

- SSL certificates and TLS termination. nginx ships in the stack, but you decide where TLS terminates. Most deployments put a load balancer in front (ALB, NLB, Azure Front Door, GCP Load Balancer, or a host nginx) and let the LB hold the certs.

- Backup and disaster recovery. Database snapshots, restore drills, and off-site copies are the customer's responsibility. The application has no built-in backup orchestration; that is intentional, because every customer's backup tooling is different.

- Monitoring and alerting. Health endpoints are exposed; what you do with them depends on your stack. Common pairings: Prometheus plus Grafana, Datadog, CloudWatch, or the internal observability platform.

- Network security. Firewall rules, VPC configuration, WAF (if your policy requires one), and the OAuth callback URLs for the integrations you enable.
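Because backup orchestration is left to the customer, a scheduled job is one common way to cover the database-snapshot item above. The Python sketch below only assembles a pg_dump command with a timestamped target file; the helper name, the file-name pattern, and the backup directory are hypothetical, and your own backup tooling may look quite different.

```python
from datetime import datetime, timezone
from pathlib import Path


def build_pg_dump_command(database_url: str, backup_dir: Path, now: datetime) -> list[str]:
    """Build a pg_dump invocation that writes a custom-format archive.

    `now` should be timezone-aware so the UTC stamp is unambiguous.
    """
    stamp = now.astimezone(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    target = backup_dir / f"breezeleave-{stamp}.dump"  # hypothetical naming scheme
    return [
        "pg_dump",
        "--format=custom",           # compressed archive, restorable with pg_restore
        f"--file={target}",
        f"--dbname={database_url}",  # pg_dump accepts a connection URI here
    ]


# Example (not executed here):
#   import subprocess
#   cmd = build_pg_dump_command(url, Path("/var/backups"), datetime.now(timezone.utc))
#   subprocess.run(cmd, check=True)
```

Passing the connection URI on the command line exposes any embedded password in the process list, so a password file or environment-based credentials may be preferable in production. Whatever produces the dump, a restore drill against a scratch database is what shows the backup is usable.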

Setup overview

Initial setup is sales-led. After the order form is signed, the customer receives the image repository credentials, the docker-compose template, and a setup runbook. The high-level sequence is:

- Provision the host, database, and Redis according to your sizing.

- Pull the Docker images for the version on the order form.

- Populate the environment file with secrets, OAuth client IDs, and SMTP credentials.

- Run the database migration script against an empty database.

- Start the stack and confirm the health endpoints return healthy.

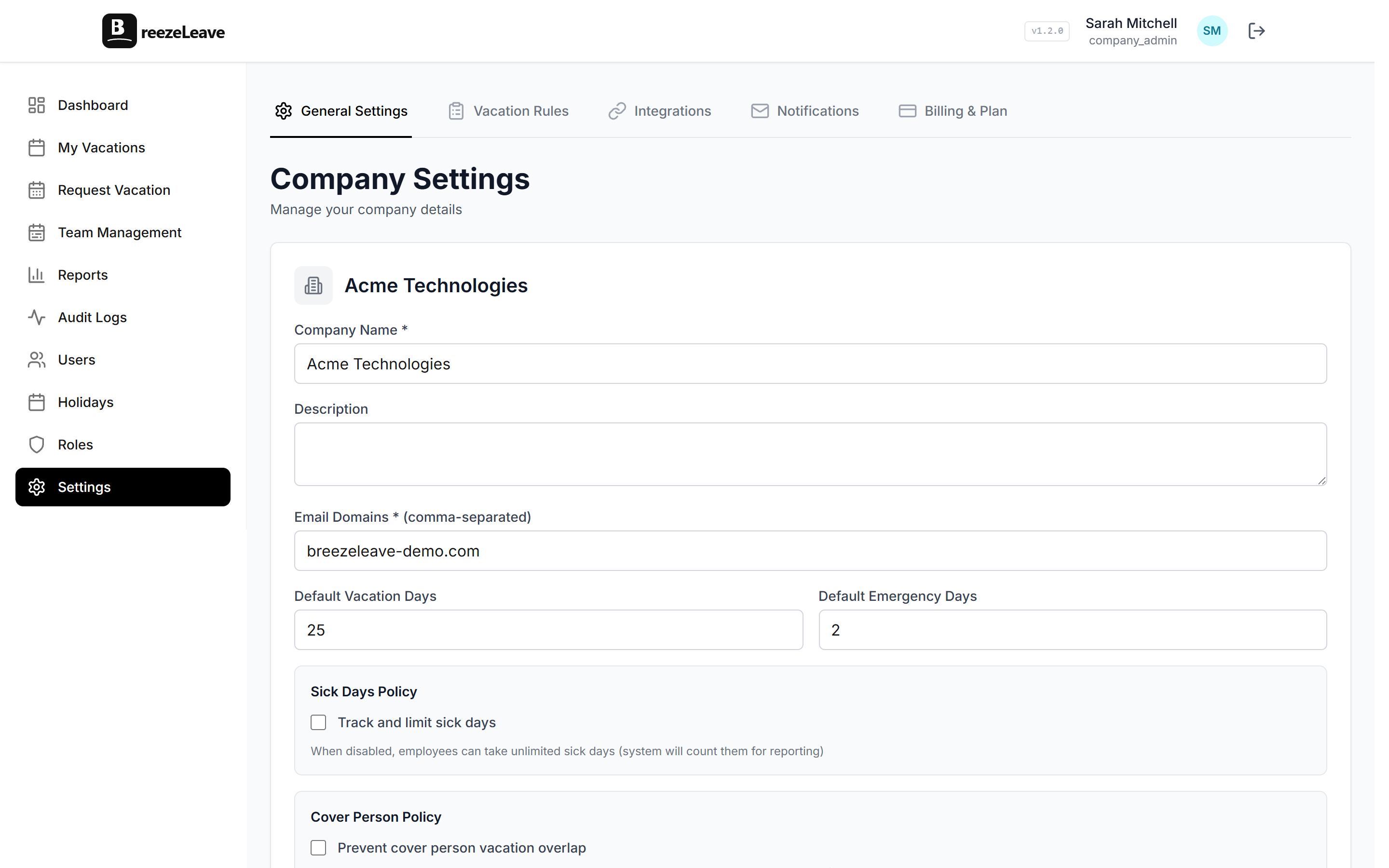

- Open the application, sign in as the seeded admin user, and walk through the first-time setup wizard.

- Enable integrations one at a time, verifying each callback URL.
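The health-check step in the sequence above can be scripted so that a deploy pipeline waits for the stack before anyone opens the browser. This is a minimal Python sketch using only the standard library. The endpoint URLs you pass in are your own (the actual paths are documented in the setup runbook, not here), and the retry counts are arbitrary defaults chosen for the example.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Iterable


def http_probe(url: str, timeout: float = 3.0) -> bool:
    """Return True when the endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, or a 4xx/5xx response.
        return False


def wait_until_healthy(
    urls: Iterable[str],
    probe: Callable[[str], bool] = http_probe,
    attempts: int = 10,
    delay_seconds: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll every URL until all report healthy or the attempts run out."""
    targets = list(urls)
    for attempt in range(attempts):
        if all(probe(url) for url in targets):
            return True
        if attempt < attempts - 1:
            sleep(delay_seconds)
    return False
```

The probe and sleep parameters are injectable so the retry logic can be exercised without a running stack.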

For the operational checklist that pairs with this overview, see the self-hosted leave management implementation checklist. It covers the infrastructure decisions, backup cadence, security review, and day-one verification an IT lead needs before kickoff.

Same product, different deployment

Feature parity is the design goal. The self-hosted instance runs the same backend code as the cloud instance, so every feature that ships to cloud is available to self-hosted customers as soon as they pull the corresponding image. That covers:

- JWT authentication. The same authentication flow as cloud. You can plug the application into your IdP for SSO once that is configured on the order form, or run it with built-in email and password.

- Slack and Microsoft Teams. Bot tokens, slash commands, approval cards, and digest channels all work the same way. The only deployment-specific concern is that Slack and Teams need to reach your callback URL, so the host has to be addressable from the public internet (or from the integration provider's IP range, if you allowlist).

- Google Calendar, ClickUp, GetAccept. Identical OAuth flows. Each integration has its own callback URL that the customer registers in their own provider tenant.

- Email delivery. SendGrid is the cloud default. Self-hosted swaps that for your SMTP host of choice. The application supports both with the same notification settings UI.

- Reports, audit logs, multi-company, project operations. All the same. The audit log surface and the role-based permissions system work the same way in self-hosted as in cloud, which is the part that matters most to security reviewers.

Updates and support cadence

Cloud users get updates as they ship. Self-hosted users decide when to pull a new image. Most enterprise customers stage updates: pull into staging on a monthly cadence, run the upgrade script against a clone of the production database, run the regression checklist, then schedule the production cutover during a low-traffic window.
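The staging step relies on the upgrade script being safe to run more than once. The sketch below illustrates the general idea of an idempotent migration runner, using Python's built-in sqlite3 module as a stand-in for PostgreSQL. It is not BreezeLeave's upgrade script: the schema_migrations table and both migrations are invented for the example.

```python
import sqlite3

# Hypothetical migrations, listed in the order they must run.
MIGRATIONS = [
    ("001_create_leave_requests",
     "CREATE TABLE leave_requests (id INTEGER PRIMARY KEY, employee TEXT NOT NULL)"),
    ("002_add_status",
     "ALTER TABLE leave_requests ADD COLUMN status TEXT DEFAULT 'pending'"),
]


def apply_migrations(conn: sqlite3.Connection) -> list[str]:
    """Apply migrations that are not recorded yet and return their versions."""
    conn.execute("CREATE TABLE IF NOT EXISTS schema_migrations (version TEXT PRIMARY KEY)")
    applied = {row[0] for row in conn.execute("SELECT version FROM schema_migrations")}

    newly_applied = []
    for version, statement in MIGRATIONS:
        if version in applied:
            continue  # already recorded, so a second run skips it
        with conn:  # commits on success
            conn.execute(statement)
            conn.execute("INSERT INTO schema_migrations (version) VALUES (?)", (version,))
        newly_applied.append(version)
    return newly_applied
```

Running apply_migrations twice against the same database applies both migrations the first time and nothing the second time, which is the property that makes a rehearsal on a staging clone meaningful.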

Each release ships with notes that cover:

- The image tags for each container in the stack.

- Any database migration steps the upgrade script will run.

- New or removed environment variables and whether they have safe defaults.

- Integration-side changes that require re-issuing tokens.

- Known compatibility issues with older versions.

Enterprise support covers the application: the support contact takes incident reports, coordinates root-cause analysis on application bugs, and helps with the upgrade scripts when the database migration step needs hand-holding. The customer's infrastructure team retains responsibility for the host, the network, and the database.

When to stick with cloud

Self-hosted is a real commitment. If any of the situations below describes your team, cloud is a better fit.

- Team under 100 people with no specific compliance constraint. The cloud version covers the feature set without the operations overhead.

- No internal capacity for Docker operations. Self-hosted assumes someone on the customer side can manage container deployments, database backups, and SSL renewals. If that capacity does not exist, the operational burden will outweigh the compliance benefit.

- Want auto-updates and faster access to new features. Cloud customers see features the day they ship. Self-hosted customers see them when they pull the new image, which is usually a few weeks behind.

- No requirement to host in a specific region. If the cloud region the vendor offers already satisfies your residency policy, self-hosted is solving a problem you do not have.

Honest tradeoff

A self-hosted deployment is the right answer for a small set of customers and the wrong answer for most. The sales conversation starts with the constraint that forced you to consider it. If we cannot identify a hard constraint, the recommendation is cloud and the conversation ends faster than you might expect.

Where it fits and where to read more

Compliance-heavy industries are the natural fit. The law firms page covers the audit-trail and access-control angle that comes up most often in legal-services deployments. The nonprofits page covers the data-stewardship side for organizations that handle donor or beneficiary data alongside employee data. Pricing for the Enterprise plan, including self-hosted as a deployment option, is on the pricing page.

Self-hosted setup is sales-led. To start the conversation, head to the pricing page and use the Enterprise contact button. The sales team will walk through the compliance constraints, the sizing, and the SLA terms before any infrastructure work begins.

Frequently asked questions

Everything you might want to know before getting started. Still have questions? Reach out anytime.

Can we run self-hosted BreezeLeave on our own cloud account or on-prem?

Yes. The Docker images are agnostic about where they run. The most common deployments are AWS, Azure, GCP, and OVH in EU regions, plus on-prem VMware and bare metal for organizations with strict isolation requirements. As long as the host can run Docker (or Kubernetes via a compose-to-helm translation), the application stack is portable. The only outbound traffic the application initiates is to the integration endpoints you choose to enable (Slack, Microsoft Teams, Google APIs, ClickUp, GetAccept, and your chosen SMTP host).

Which integrations work with self-hosted?

All of them. Slack, Microsoft Teams, Google Calendar, ClickUp, and GetAccept use the same OAuth and webhook flows in self-hosted as in cloud. The one piece you configure differently is the public callback URL: the integration provider needs to reach your instance, so the host has to be addressable from the integration provider over HTTPS. If your network blocks all inbound traffic, integrations that require an inbound webhook (Slack interactions, ClickUp webhooks) will not function. Outbound-only flows (email, calendar push, ClickUp polling) still work as long as egress to those providers is allowed. A fully air-gapped network with no egress cannot reach any external provider.
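As a rough planning aid, the inbound and outbound distinction in this answer can be written down as a small lookup. The Python sketch below encodes only the flows named in the answer; the Flow type and the working_flows helper are hypothetical, and it assumes every listed flow needs egress to its external provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    needs_inbound: bool  # the provider must reach your callback URL over HTTPS


FLOWS = [
    Flow("Slack interactions", needs_inbound=True),
    Flow("ClickUp webhooks", needs_inbound=True),
    Flow("Email", needs_inbound=False),
    Flow("Calendar push", needs_inbound=False),
    Flow("ClickUp polling", needs_inbound=False),
]


def working_flows(inbound_allowed: bool, outbound_allowed: bool) -> list[str]:
    """List the flows expected to work under a given network policy."""
    if not outbound_allowed:
        return []  # assumption: every flow talks to an external provider
    return [flow.name for flow in FLOWS if inbound_allowed or not flow.needs_inbound]
```

For a network that allows egress but no inbound traffic, working_flows(False, True) returns the three outbound-only flows.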

How do updates work for self-hosted?

BreezeLeave publishes signed Docker images on a regular cadence. The release notes list the new image tags, any required environment-variable additions, and any database migration steps. You pull the new images on your own schedule. There is no auto-update. The upgrade scripts handle database migrations idempotently, so re-running a step that has already been applied is harmless and you can use them in a staged rollout (staging first, then production).

What support do Enterprise self-hosted customers get?

Enterprise self-hosted customers get a named technical contact, an SLA on incident response, sales-led initial setup, access to the private upgrade documentation, and a security review channel for compliance questions. The exact SLA terms (response time, resolution targets, on-call coverage) are written into the order form so they are not ambiguous. Support is bounded to the application itself; the customer remains responsible for the underlying infrastructure.

Can we keep all data in the EU?

Yes. EU data sovereignty is one of the most common reasons companies pick self-hosted. You can host the application, the PostgreSQL database, and the Redis cache in an EU region of your choice. Apart from what you send to integration providers, no application data leaves the infrastructure you run on. Providers such as Slack, Google, and Microsoft still receive whatever you send them through their APIs, which you govern through your own DPAs with those providers.

Does self-hosted include audit logging?

Yes. Audit logging is part of the core application, so self-hosted instances produce the same append-only audit trail as cloud. Retention is configurable; you decide whether to keep logs for one year, three years, or longer, and you decide where to forward them (a downstream SIEM, an internal log lake, or just inside the application database).

How is self-hosted priced?

Self-hosted is included as a deployment option on the Enterprise plan, not as a separate price. The per-user rate is the same as the cloud Enterprise price ($4 monthly or $3 annual). Sales handles the order form, the SLA terms, and the initial setup. There are no extra licensing fees for the Docker images themselves; the seat count drives the contract.
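For budgeting, the seat-based arithmetic is simple enough to sketch. The Python example below assumes both quoted figures are per user per month, with the $3 rate applying under annual billing. That reading is an assumption, so confirm the billing terms on the order form before relying on the numbers.

```python
# Assumed interpretation: USD per user per month, by billing cycle.
RATES_PER_USER_PER_MONTH = {"monthly": 4, "annual": 3}


def yearly_cost(seats: int, billing: str) -> int:
    """Return the estimated cost in USD for twelve months of service."""
    return seats * RATES_PER_USER_PER_MONTH[billing] * 12


# Example: the 220-person firm from the introduction.
monthly_billing_total = yearly_cost(220, "monthly")  # 220 * 4 * 12
annual_billing_total = yearly_cost(220, "annual")    # 220 * 3 * 12
```

Under that assumption, 220 seats come to $10,560 per year on monthly billing and $7,920 per year on annual billing.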